Research

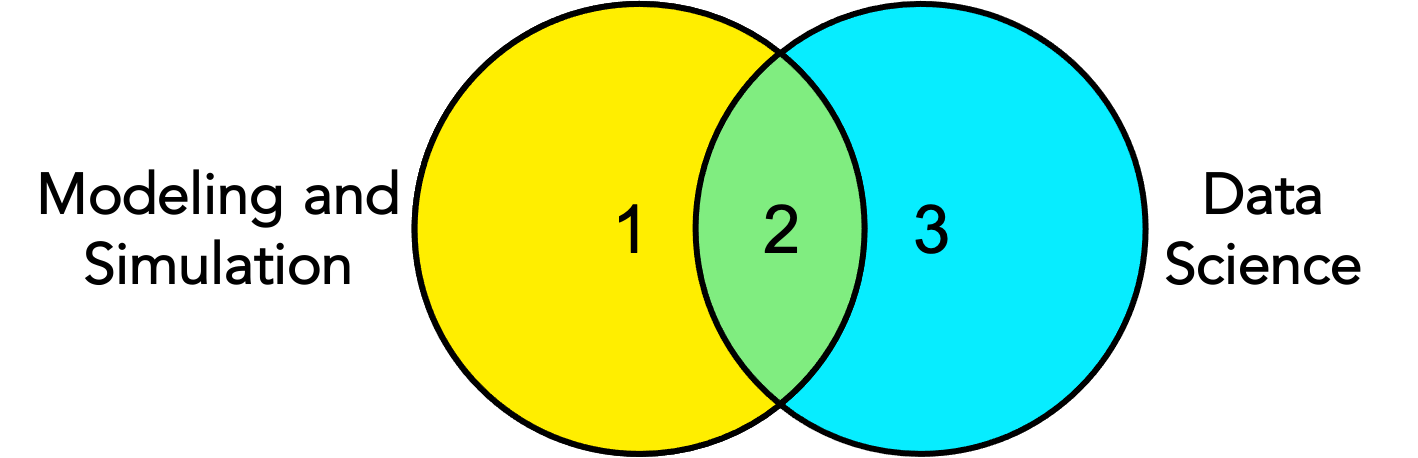

My research spans two related areas: Modeling & Simulation (M&S) and Data Science. In the Venn diagram above, which depicts this relationship, my primary research efforts fall in areas 1 and 2. On the M&S-focused side (area 1), I tackle challenges in core M&S topics, including verification and validation, conceptual modeling, and M&S tools. My second main focus is using Data Science for M&S (area 2). In particular, I design and use data-driven simulations, conduct simulation output analytics, and apply emerging machine learning techniques at different steps of the M&S process. Though more limited, my data science-only research (area 3) involves creating and using data science techniques (e.g., machine learning) to solve problems in different domains. Cybersecurity and urban science are the main application domains of my research.

Last updated on Oct 27, 2024.

Below is a list of research projects in which I am or was involved as a participant, mentor, or lead, highlighted according to the color scheme above. Click a title to see the details.

Legend: new, ongoing, completed

Data-Driven Modeling of Agents

Data-Driven Mobility Modeling for COVID-19 Simulation

Verification and Validation

Verification and Validation Framework for COVID-19 Models

The Future of Agent-Based Model Verification and Validation

Verification and Validation as a Service

Simulation Data and Analytics

Reusable Synthetic Population Data

Past/Completed Research Projects

Data-Driven Modeling of Agents

Data-Driven ABM Methodology and Applications

Social Media Analytics

Anti-American Misinformation/Disinformation Efforts in Social and Mass Media

Predicting People’s Home Location From Sparse Footprints

Ever wondered how tourists feel during their visits to attractions?

Human Mobility Prediction and Analysis

Foot Traffic Prediction

Change of Human Mobility During COVID-19

Modeling and Simulation of Social Systems

DARPA Urban Life Model

How Do Modelers Develop Models?

Contributions to Simulation of Cybersecurity

Verification and Validation

Methodological Contributions to Simulation Verification and Validation (Pre-2021)

Modeling and Simulation Practice

Web-Based Simulations and Tools

Here are some simulations and tools written in JavaScript.

- Segregation Simulation: Demo – Source Code

- Flocking Simulation: Demo – Source Code

- Google Maps Polygon Extraction Tool: Demo